Feeling for Images Created by Generative Artificial Intelligence

Nicolas Esposito, Simon Autard, and Sawsane Ennebati (August 2023)

Short research report from the ErgoDesign Lutin-Gobelins laboratory (Gobelins Paris)

Introduction

As tools for creating images with generative artificial intelligence (AI) multiply, such as Midjourney, DALL-E, or Stable Diffusion (Rombach et al., 2022), many questions are being asked, particularly about how the resulting images are perceived (Ragot et al., 2020). With this in mind, we conducted an experiment on how people feel when confronted with this type of image. We have already worked on feelings towards different types of images. This time, we report a pilot experiment that could give rise to more in-depth work, particularly in view of future advances in this field.

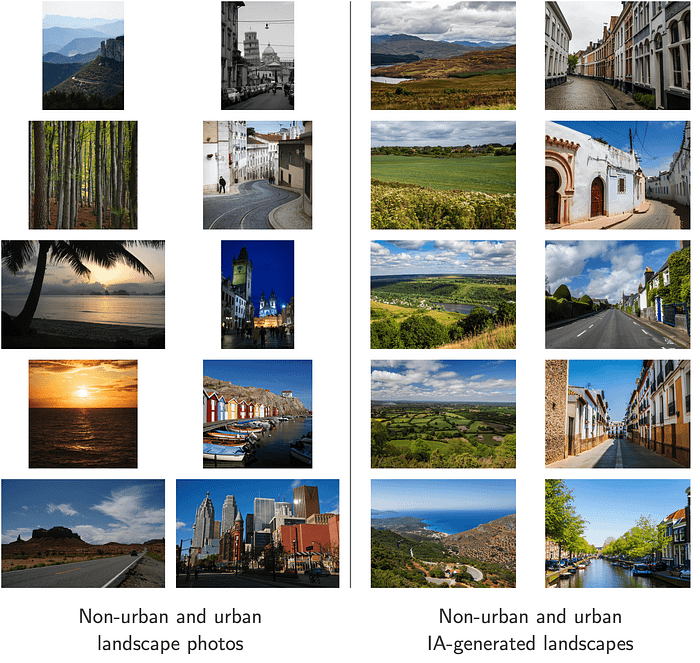

For this pilot experiment, we compared the feelings of twenty participants when faced with twenty landscapes, half of which were photographs, the other half having been generated by AI. Each type of image was represented by five non-urban and five urban landscapes (see figure 1).

We wondered whether we might find differences in feeling and differences in success in identifying the type of image:

- between the two types of images (photos and AI-generated images),

- between the two types of images as perceived,

- between AI-generated images according to their level of realism,

- between AI-generated images according to the environment (urban or not),

- according to the profile of the participants (more or less expert in the field).

This has led us to formulate ten hypotheses:

- the feeling would be more positive with photos,

- success in identifying the type of image should be similar for both types,

- the feeling would be more positive with images perceived as photos,

- success should be similar for both types of images as perceived,

- feelings about AI-generated images should correlate with their realism,

- failure with AI-generated images should correlate with realism,

- feeling towards AI-generated images should be more negative when it comes to urban landscapes (less realistic in our set of images),

- for AI-generated images, success should be greater with urban landscapes than with non-urban landscapes (the former being less realistic),

- the most expert participants would feel more negative about AI-generated images (seeing them more as such),

- the most expert participants would be more successful with AI-generated images.

Methodology

The experiment was carried out with twenty participants with varying levels of domain expertise (image in general and AI-generated images). Each participant viewed the twenty images in Figure 1 in random order. The participants knew they were a mixture of photos and AI-generated images. For each image, they were asked how they felt about it (from 0 for very bad to 4 for very good) and their estimation of the type of image (from 0 for not at all AI-generated to 4 for completely AI-generated), as well as a few words to explain their choices. At the end, there was an open-ended question on overall feelings about the images as a whole, and another on feelings about the images recognized as AI-generated.
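The two 0 to 4 ratings collected per image can be turned into per-type averages along the following lines. This is a minimal sketch, not the authors' procedure: the report does not spell out how success was scored, so the rule used here (the estimate itself for an AI-generated image, 4 minus the estimate for a photo) is an assumption, and the function names and sample responses are hypothetical.

```python
from statistics import mean

SCALE_MAX = 4  # both rating scales in the report run from 0 to 4


def success_score(estimate: int, is_ai_generated: bool) -> int:
    """Assumed scoring rule: how close the estimate is to the true type.

    estimate: 0 (not at all AI-generated) to 4 (completely AI-generated).
    """
    if not 0 <= estimate <= SCALE_MAX:
        raise ValueError("estimate must be between 0 and 4")
    return estimate if is_ai_generated else SCALE_MAX - estimate


def mean_by_type(responses, is_ai_generated: bool) -> dict:
    """Average feeling and success over the responses for one image type."""
    rows = [r for r in responses if r[2] == is_ai_generated]
    return {
        "feeling": mean(r[0] for r in rows),
        "success": mean(success_score(r[1], r[2]) for r in rows),
    }


# Hypothetical responses (feeling, AI estimate, image is AI-generated).
# These are placeholder values, not data from the experiment.
responses = [
    (3, 1, False),
    (2, 4, True),
    (4, 0, False),
    (1, 3, True),
]

photo_stats = mean_by_type(responses, is_ai_generated=False)
ai_stats = mean_by_type(responses, is_ai_generated=True)
print(photo_stats, ai_stats)
```

Under this assumed rule, a score of 2 corresponds to an undecided answer, and 4 to a fully confident correct identification.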

Photo selection and image generation were carried out with the following constraints in mind: no known images, no close subjects in the foreground (which could make estimation too easy), and images that were easy to understand and not expected to evoke strong emotions. In addition, the AI-generated images had to represent the level of current tools (we chose Firefly), and they had to be easy to identify as such.

Results

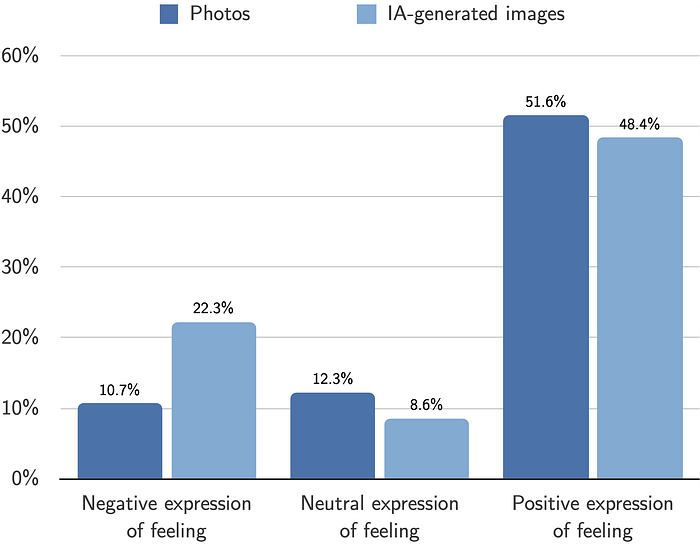

As we had envisaged, feelings were more positive with photos than with AI-generated images (see figure 2), and success in identifying the type of image was similar for both types. The average success scores were 2.51 (photos) and 2.66 (AI-generated images) out of 4; we had expected them to be higher, that is, we had expected the type of image to be easier to identify. Distinguishing between images according to how they were perceived gave the same results for feeling and success, but even more markedly.

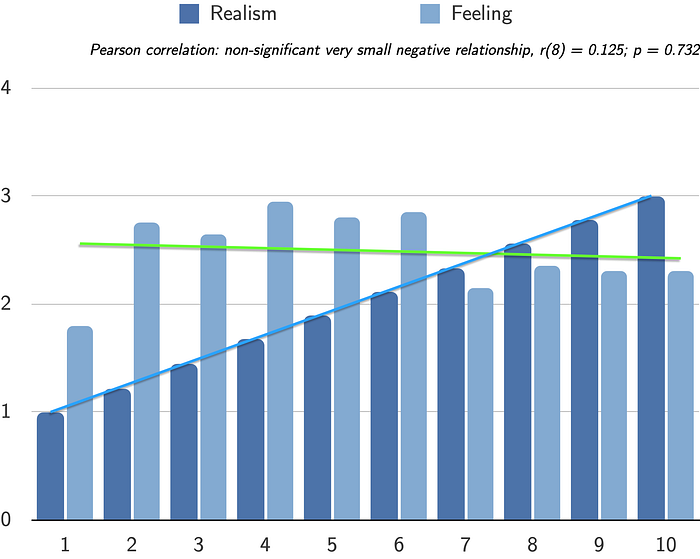

We did not confirm a correlation between feeling towards AI-generated images and their realism. But we did confirm the correlation between failure when faced with AI-generated images and their realism (see figure 3): the more realistic the images, the less they were identified as AI-generated. With regard to the distinction between urban and non-urban landscapes, we did not measure any significant differences for feeling and success, although we can note a greater success with urban landscapes (less realistic for our AI-generated images).
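A relation of this kind between realism and identification can be checked with a correlation coefficient computed per image. The sketch below assumes a Pearson coefficient (the report does not name the statistic used), and the realism ratings and mean success values are hypothetical placeholders, not the experiment's data.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length, at least 2 items")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


# Hypothetical per-image values for four AI-generated images:
# a realism rating and the mean success in identifying the image (0 to 4).
realism = [1.0, 2.0, 3.0, 4.0]
mean_success = [3.5, 3.0, 2.0, 1.5]

r = pearson_r(realism, mean_success)
print(f"r = {r:.3f}")  # negative: higher realism goes with lower success
```

A negative coefficient here corresponds to the pattern described above: the more realistic the image, the lower the success in identifying it as AI-generated. With only ten AI-generated images, any such coefficient would need a significance test before being interpreted.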

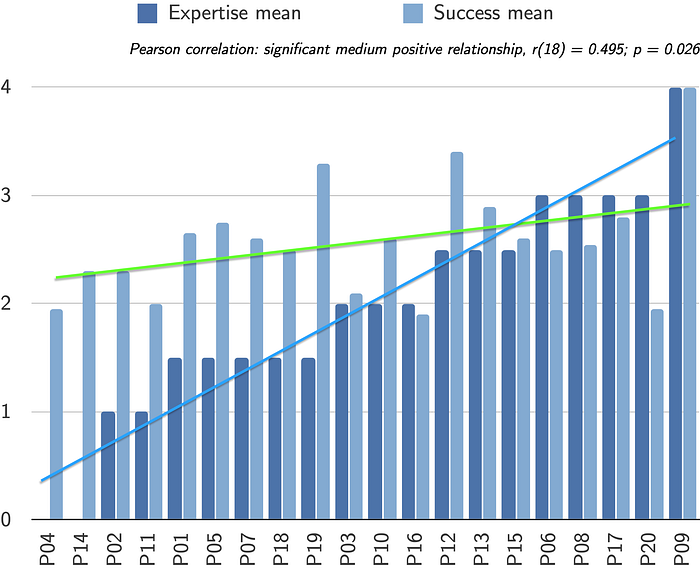

Finally, we did not confirm a link between participants’ level of expertise and how they felt about AI-generated images. But we did confirm a correlation between participants’ level of expertise and success (see figure 4). The higher the participants’ level of expertise, the more successful they were in identifying the type of images.

Six of our ten hypotheses were validated in this experiment. Overall, we measured less positive feelings towards AI-generated images than towards photos. This was also reflected in the expression of feelings. After categorizing participants’ feedback (into negative, positive and neutral), we found that the percentage of negative feedback was about twice as high for AI-generated images as for photos: 22.3% and 10.7% respectively (see figure 5).
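The percentages above come from counting categorized comments. As a minimal sketch, the share of each category can be computed as follows; the label list is hypothetical, while the two reported percentages are taken from the text and used only to check the ratio between them.

```python
from collections import Counter

CATEGORIES = ("negative", "neutral", "positive")


def category_shares(labels):
    """Percentage of comments falling in each category."""
    if not labels:
        raise ValueError("no labels to count")
    counts = Counter(labels)
    return {c: 100 * counts[c] / len(labels) for c in CATEGORIES}


# Hypothetical categorized comments, not the experiment's data.
labels = ["negative", "positive", "neutral", "positive"]
shares = category_shares(labels)
print(shares)

# Ratio between the two percentages reported in the text.
reported_ai, reported_photo = 22.3, 10.7
ratio = reported_ai / reported_photo
print(f"ratio = {ratio:.2f}")
```

The ratio of the reported values is a little over 2, which matches the statement that negative feedback was roughly twice as frequent for AI-generated images.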

Discussion

The results of this pilot experiment lead us to consider more in-depth studies, based on the following points in particular. We could work with images that are more easily identifiable (images generated by AI or not) or, on the contrary, less easily identifiable. We could form groups of participants according to specific levels of expertise. Similarly, we could create subsets of images according to specific levels of realism. We could compare different generation tools. We could also use other types of images, not just landscapes and not just photos, potentially drawings or paintings, figurative (Gu & Li, 2022) or abstract (Israfilzade, 2020). Finally, we could use eye tracking to work on visual attention (Rousselet & Fabre-Thorpe, 2003) and we could take more time with participants to get more in-depth feedback, particularly on how they view these images.

Conclusion

From the results of this pilot experiment, with the images used (half of which were generated by Firefly), we note in particular that:

- feedback was more positive with the photos than with the AI-generated images (even more so when distinguishing between images according to how they were perceived),

- success in identifying the type of image was similar for both types (even more so when distinguishing between images according to how they were perceived),

- the more realistic the AI-generated images, the less they were identified as AI-generated,

- the higher the participants’ level of domain expertise, the better they were at identifying image type.

References

- Gu, L. & Li, Y. (2022). Who Made the Paintings: Artists or Artificial Intelligence? The Effects of Identity on Liking and Purchase Intention. Frontiers in Psychology, 13.

- Israfilzade, K. (2020). What’s in a Name? Experiment on the Aesthetic Judgments of Art Produced by Artificial Intelligence. Journal of Arts, 3(2), 143–158.

- Ragot, M., Martin, N. & Cojean, S. (2020). AI-Generated vs. Human Artworks. A Perception Bias Towards Artificial Intelligence? Extended Abstracts of the 2020 CHI Conference on Human Factors in Computing Systems, 1–10.

- Rombach, R., Blattmann, A., Lorenz, D., Esser, P. & Ommer, B. (2022). High-Resolution Image Synthesis With Latent Diffusion Models. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 10684–10695.

- Rousselet, G. A. & Fabre-Thorpe, M. (2003). Les mécanismes de l’attention visuelle. Psychologie française, 48(1), 29–44.